2017 has come to an end, and it’s time to reflect on the year gone by and look forward to what is in store for us, the cybersecurity professionals, in 2018.

To start with, let’s look at some of the major security incidents of 2017. The following five security and data breaches made headlines all over the globe:

Equifax

This breach was publicly disclosed in September this year. It is a truly vast breach: the stolen data included the Social Security and driver’s license numbers of up to 143 million US consumers. Credit card numbers and other personally identifying information were also compromised for a smaller number of US consumers. With this sensitive data now exposed, the operational impact on businesses is that many organizations, including banks, that rely on this data to prove the identity of online users may need to implement additional, expensive and cumbersome authentication procedures.

Apparently, the attack vector was a simple one: the cyber criminals exploited CVE-2017-5638, a critical remote code execution vulnerability in Apache Struts 2. Ironically, this wasn’t a zero-day; a patch for the vulnerability had been available since March this year.

Yahoo

Although the attack occurred, or at least began, in 2013 (the same year Target was hit by a cyber attack), it only came to light this year, when parent company Verizon announced in October that every one of Yahoo’s 3 billion accounts had been hacked in 2013. That’s more than three times the initial assessment made last year. In addition to the massive size of the attack, what is astonishing is that it remained largely hidden for so many years. It makes me wonder: how many other huge attacks have occurred that we still don’t know about?

Uber

Last month, Uber CEO Dara Khosrowshahi revealed that two hackers broke into the company in late 2016 and stole personal data, including phone numbers, email addresses, and names, of 57 million Uber users. Among those, the hackers stole 600,000 driver’s license numbers of drivers for the company. Instead of disclosing the breach, as the law requires, Uber paid $100,000 to the hackers to conceal the fact that a breach had occurred. Why is this attack significant?

- The vast number of records compromised

- The fact that it ended in a ransom-style payout, echoing ransomware, the most widely used attack vector in 2017

- The company paid the attackers (and thus encouraged the illegal industry), and,

- Nobody at such a large company disclosed the breach.

Shadow Brokers leak of NSA files

In 2013, a mysterious group of hackers that calls itself the Shadow Brokers stole a few disks full of National Security Agency secrets. Since the beginning of 2017, they’ve been dumping these secrets on the internet. They have publicly embarrassed the NSA and damaged its intelligence-gathering capabilities, while at the same time putting sophisticated cyber-weapons in the hands of anyone who wants them. This hack is significant because, with all this information now in the hands of cybercriminals, smaller groups are already committing crimes that used to be the preserve of well-funded, state-sponsored attackers. The level of sophistication among attackers took a giant leap forward.

WannaCry

Enough has been said and written about WannaCry, the best-known example of ransomware, which turned out to be the attack vector most widely used by cyber criminals this year. This ransomware plagued thousands of organizations in massive global cyberattacks. The widespread impact of WannaCry can be attributed to the NSA losing control of its key hacking tools to the Shadow Brokers group, which enabled hackers to install backdoors that distributed the ransomware to hundreds of thousands of computers.

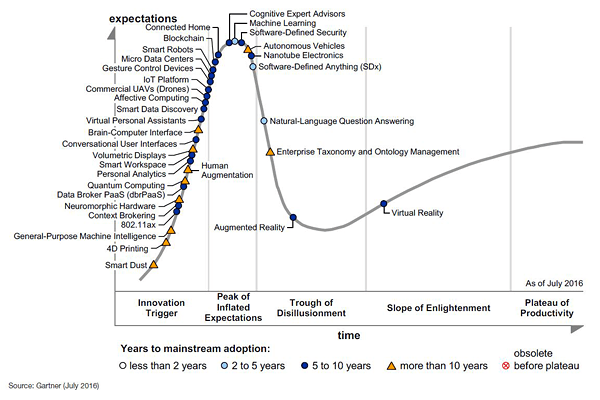

A key outcome of, and lesson from, these incidents is that organisations are shifting their focus to incident and breach detection and response. More importantly, the need for automation in these two areas, powered by machine learning techniques, has gained a lot of momentum.

Looking back through 2017, there has been significant progress in the effective use of machine learning techniques for detecting and responding to cyber attacks. The following examples demonstrate this.

I have broken these examples down into two categories: the offensive side (the cybercriminal’s perspective) and the defensive side (the security architect’s or incident analyst’s perspective).

Developments in Offensive security

Attackers have started leveraging machine learning more actively to improve their attacks. There is not much evidence of such use in the breaches I called out above, so I have picked a few examples from the recently held Black Hat conference (US).

One Black Hat talk, “Bot vs. Bot: Evading Machine Learning Malware Detection”, explored how adversaries could use ML to figure out what ML-based malware detection mechanisms were “looking” for. They could then create malware that avoided those things and thus evaded detection. Another talk, “Wire Me Through Machine Learning”, investigated how spammers might raise the success rate of their phishing campaigns by using ML to improve their phishing emails.

At DEF CON, researchers presented “Weaponizing Machine Learning: Humanity Was Overrated Anyway”. They introduced DeepHack, an open source AI tool that hacks web applications. Meanwhile, ML was an underlying subject in many other talks that weren’t directly about it. It’s clear that cybersecurity researchers and attackers alike are leveraging ML and AI to speed up and improve their projects.

Developments in Defensive security

1. Let’s start with the Equifax breach. As mentioned earlier, the attackers exploited the Apache Struts Jakarta Multipart parser vulnerability, CVE-2017-5638. In this particular case, we could look at using various anomaly detection techniques. Examples include suspicious process/service activity anomalies (e.g. a rare process or MD5 hash for a given user or host), suspicious network activity anomalies (e.g. traffic to rare domains), and suspicious Tomcat web server access anomalies. These anomaly detection rules can be a starting point for a machine learning based intrusion detection tool.
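To make the "rare process for a host" idea concrete, here is a minimal sketch in Python. It is only an illustration under my own assumptions: the event format (host, process hash), the function names and the `max_seen` threshold are all hypothetical, and a real tool would learn its baseline from far richer telemetry.

```python
from collections import Counter, defaultdict

def build_baseline(events):
    """Count how often each process hash has been seen on each host."""
    baseline = defaultdict(Counter)
    for host, process_hash in events:
        baseline[host][process_hash] += 1
    return baseline

def rare_process_alerts(baseline, new_events, max_seen=1):
    """Flag events whose process hash was seen at most `max_seen` times on that host."""
    alerts = []
    for host, process_hash in new_events:
        if baseline[host][process_hash] <= max_seen:
            alerts.append((host, process_hash))
    return alerts

# Hypothetical history: a web server that normally runs only two binaries.
history = [("web01", "md5:tomcat")] * 50 + [("web01", "md5:java")] * 30
today = [("web01", "md5:tomcat"), ("web01", "md5:unknown-shell")]

alerts = rare_process_alerts(build_baseline(history), today)
print(alerts)
```

A simple frequency count like this is not machine learning by itself, but its output (rarity scores per user, host or domain) is the kind of feature a learned model could consume.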

2. Detecting web-application attacks: Web applications are a primary target for cyber criminals, as these applications are mostly exposed to the internet and in many cases, as also seen in the Equifax attack, are not effectively configured or patched to prevent the exploitation of web application vulnerabilities. One of the most widely used web application attacks is SQL injection. There are many methods of detecting it that rely on machine learning algorithms rather than on signature-based systems. One such approach is described in detail in this white paper. The method identifies SQL injection code using the attributes of HTTP parameters and a Bayesian classifier. Such methods can be a lot more effective than traditional web application firewalls.

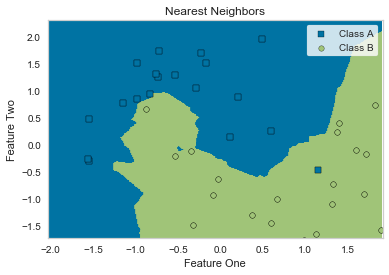
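The Bayesian approach to SQL injection detection described above can be sketched roughly as follows. This is not the white paper's implementation: the four attributes, the keyword list, the tiny training set and the `NaiveBayes` class are all assumptions of mine, chosen only to show how a classifier can work from parameter attributes instead of signatures.

```python
import math
import re
from collections import Counter, defaultdict

SQL_KEYWORDS = re.compile(r"\b(select|union|insert|update|delete|drop|or|and|sleep)\b", re.I)

def attributes(value):
    """Map one HTTP parameter value to a tuple of coarse, discrete attributes."""
    return (
        min(len(value) // 20, 3),                       # length bucket
        min(len(SQL_KEYWORDS.findall(value)), 3),       # SQL keyword count, capped
        min(sum(value.count(c) for c in "'\";="), 3),   # quote/semicolon/equals count, capped
        int("--" in value or "/*" in value or "#" in value),  # comment marker present
    )

class NaiveBayes:
    """Categorical naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, samples, labels):
        self.classes = sorted(set(labels))
        self.class_counts = Counter(labels)
        self.counts = defaultdict(int)   # (label, feature index, value) -> count
        self.values = defaultdict(set)   # feature index -> values seen in training
        for x, y in zip(samples, labels):
            for i, v in enumerate(x):
                self.counts[(y, i, v)] += 1
                self.values[i].add(v)
        return self

    def predict(self, x):
        total = sum(self.class_counts.values())

        def log_posterior(label):
            score = math.log(self.class_counts[label] / total)
            for i, v in enumerate(x):
                # +1 in the denominator leaves room for values never seen in training
                smoothed = (self.counts[(label, i, v)] + 1) / (
                    self.class_counts[label] + len(self.values[i]) + 1)
                score += math.log(smoothed)
            return score

        return max(self.classes, key=log_posterior)

# Made-up training data, for illustration only.
benign = ["42", "john.smith", "blue shoes size 10", "2017-12-31", "rock and roll", "O'Brien"]
malicious = ["1' OR '1'='1", "admin'--", "1; DROP TABLE users",
             "' UNION SELECT username, password FROM users--",
             "1' AND SLEEP(5)#", "x' OR 1=1 /*"]

samples = [attributes(v) for v in benign + malicious]
labels = ["benign"] * len(benign) + ["sqli"] * len(malicious)
model = NaiveBayes().fit(samples, labels)

verdict_attack = model.predict(attributes("' OR 1=1--"))
verdict_normal = model.predict(attributes("green tea"))
print(verdict_attack, verdict_normal)
```

With only a dozen training values this is a toy, but it shows why such a classifier can generalise to payloads it has never seen: it scores the shape of the parameter, not an exact string.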

3. A deep learning approach to network intrusion detection: In the last two years, there have been many developments in the use of conventional machine learning algorithms to build network intrusion detection systems (NIDS). These tools are essentially classifiers that differentiate normal traffic from anomalous traffic. They perform a feature selection step to extract a subset of relevant features from the traffic dataset and so improve the classification results. Feature selection reduces the risk of incorrect training by removing redundant features and noise.

More recently, deep learning based methods have been successfully applied in audio, image, and speech processing applications. These methods aim to learn a good feature representation from a large amount of unlabelled and unstructured data, and then apply the learned features to a limited amount of labelled data in supervised classification. The labelled and unlabelled data may come from different distributions, but they must be relevant to each other. Thus, by combining signals from unlabelled and unstructured data with labelled and structured data (logs), we can significantly improve our chances of detecting anomalous behaviour, and in turn a real cyber incident. Here is an interesting white paper that describes one such system in detail.
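The feature selection step that conventional NIDS classifiers rely on can be sketched as below. This is a deliberately simple filter, assuming a variance cut-off and a Pearson correlation cut-off that I picked for illustration; the feature names and flow values are made up, and real systems typically use more sophisticated selection methods.

```python
import math
import statistics

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

def select_features(columns, min_variance=1e-6, max_correlation=0.95):
    """Keep features that vary and are not near-duplicates of an already kept feature."""
    kept = []
    for name, values in columns.items():
        if statistics.pvariance(values) <= min_variance:
            continue  # constant column: carries no information for the classifier
        if any(abs(pearson(values, columns[k])) >= max_correlation for k in kept):
            continue  # redundant: almost perfectly correlated with a kept feature
        kept.append(name)
    return kept

# Hypothetical per-flow features extracted from network traffic.
flows = {
    "duration_s":       [1.0, 2.0, 0.5, 30.0, 1.5],
    "bytes_sent":       [500, 120, 900, 300, 60],
    "kilobytes_sent":   [0.5, 0.12, 0.9, 0.3, 0.06],  # same signal as bytes_sent
    "protocol_version": [4, 4, 4, 4, 4],              # never changes
    "failed_logins":    [0, 0, 1, 0, 5],
}

selected = select_features(flows)
print(selected)
```

The deep learning methods discussed above aim to replace this kind of hand-tuned filtering with representations learned directly from unlabelled traffic.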

4. Detecting WannaCry using machine learning: Ransomware has exploded in the past two years, with programs such as Locky and WannaCry infecting hosts in high-profile environments on a weekly basis. From power utilities to healthcare systems, ransomware indiscriminately encrypts the files on the victim’s computer and demands payment (usually in cryptocurrency, such as Bitcoin). Conventional signature-based detection techniques often fail, as new variants of this malware are released on a daily or even hourly basis. One potentially useful anti-ransomware tool that uses machine learning is ShieldFS, created by a group of researchers from Politecnico di Milano and Trend Micro and presented at Black Hat 2017. The key to this technique is applying machine learning to operating-system-level file access patterns.

Implemented as a Windows filesystem filter running in the kernel, ShieldFS isn’t a filesystem proper. Instead, it adds functionality to the underlying filesystem. As you probably know, two of the most common challenges in machine learning are feature engineering (coming up with a list of “features” that describe the input) and feature selection (figuring out which of those features actually contribute to generating the correct answer). Feature engineering in ShieldFS seemed straightforward to me, since many of the features were simple counts of the types of events the filter observed, such as directory listings and writes. The researchers were also fortunate that so many of the features showed obvious qualitative differences between malicious (red) and benign (blue) programs, making feature selection a high-confidence process as well.

Using binary inspection (“static analysis”), they were able to supplement the results based on operation statistics (“dynamic analysis”). The team implemented a multitiered machine learning model to preserve long-term trends while still reacting to new behavioural patterns. Thanks to a copy-on-write policy, if a process started to exhibit ransomware behaviour, they could kill it and restore the original files from the copies. The system detected ransomware with a 96.9% success rate, and even in the other 3.1% of cases the original content was still stored, so 100% of the encrypted files could be restored. This is unheard of in the world of signature-based malware detection tools.
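The copy-on-write and kill-and-restore idea can be illustrated with a toy, in-memory sketch. To be clear, this is not how ShieldFS is implemented: the `ShadowingMonitor` class, the entropy threshold and the write counter are my own stand-ins, and a fixed threshold takes the place of the trained, multitiered classifiers the researchers describe.

```python
import math
from collections import Counter, defaultdict

def entropy(data: bytes) -> float:
    """Shannon entropy in bits per byte; encrypted data tends towards 8."""
    if not data:
        return 0.0
    counts = Counter(data)
    return -sum(n / len(data) * math.log2(n / len(data)) for n in counts.values())

class ShadowingMonitor:
    """Toy in-memory stand-in for a filesystem filter (hypothetical, not ShieldFS)."""

    def __init__(self, files, max_suspicious_writes=3, entropy_threshold=7.0):
        self.files = dict(files)         # path -> current content
        self.shadow = defaultdict(dict)  # pid -> {path: content before its first write}
        self.suspicious = Counter()      # pid -> number of high-entropy writes
        self.killed = set()
        self.max_suspicious_writes = max_suspicious_writes
        self.entropy_threshold = entropy_threshold

    def write(self, pid, path, data):
        """Apply a write; return False if the process is (or just got) killed."""
        if pid in self.killed:
            return False
        if path in self.files:
            # Copy-on-write: remember the content this process is about to replace.
            self.shadow[pid].setdefault(path, self.files[path])
        self.files[path] = data
        if entropy(data) >= self.entropy_threshold:
            self.suspicious[pid] += 1
        if self.suspicious[pid] >= self.max_suspicious_writes:
            self._kill_and_restore(pid)
            return False
        return True

    def _kill_and_restore(self, pid):
        """Mark the process as killed and roll back every file it modified."""
        self.killed.add(pid)
        for path, original in self.shadow.pop(pid, {}).items():
            self.files[path] = original

monitor = ShadowingMonitor({
    "a.txt": b"meeting notes",
    "b.txt": b"budget draft",
    "c.txt": b"holiday photos",
})

# A benign editor (hypothetical pid 100) saves a low-entropy change.
monitor.write(100, "a.txt", b"meeting notes, updated")

# A ransomware-like process (hypothetical pid 666) overwrites everything
# with high-entropy data; bytes(range(256)) stands in for ciphertext.
fake_ciphertext = bytes(range(256))
for path in ["a.txt", "b.txt", "c.txt"]:
    monitor.write(666, path, fake_ciphertext)

print(monitor.killed, monitor.files)
```

The point of the design is that detection does not have to be perfect or instant: because originals are shadowed before the first write, files touched before the process is flagged can still be rolled back.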

How will 2018 turn out to be?

Based on the cybersecurity events that we saw in 2017, here are some of the trends to watch out for in 2018. Though not intended as a comprehensive overview, these are some of the key areas in cybersecurity that will undoubtedly shape the security conversations of 2018.

GDPR

Data privacy and data security have long been considered two separate missions with two separate and distinct objectives. But all that will change in 2018. With serious global regulations kicking into effect, especially in Europe, and with the regulatory responses to data breaches increasing, organizations will build new data management frameworks centered on controlling data – controlling who sees what data, in what state, and for what purpose. 2018 will prove that cybersecurity without privacy is a thing of the past.

Ransomware to continue to play

Ransomware will continue to represent the most dangerous threat to organizations and end-users. The number of new ransomware families will continue to increase, and their authors will focus more on mobile devices and implement new evasion techniques, making these threats even more efficient and difficult to eradicate.

New ransomware-as-a-service platforms will become available on the dark web, making it very easy for wannabe crooks to set up their own ransomware campaigns.

IoT, a privileged target of hackers

During 2017, botnets targeted over 122,000 IP cameras with DDoS attacks, and IoT attacks on wireless routers virtually shut down the internet for several hours. Baby and pet monitors, medical devices, and dozens of other gadgets were hacked. Although we are a long way from securing the IoT, these incidents served as a wake-up call, and many organizations have added IoT security to their agendas and are talking seriously about securing it going forward.

Critical infrastructure to include Social media too

Until recently, social media was just a fun way to communicate and stay up to date with friends, family and the latest viral videos. But along the way, as we started to follow various influencers and to use Facebook, Twitter and other platforms as curators for our news consumption, social media became inextricably linked with how we experience and perceive our democracy.

The definition of critical infrastructure, previously limited to areas like power grids and sea ports, will likely expand to include internet social networks. While a downed social network will not prevent society from functioning, these websites have the ability to influence elections and shape public opinion generally, which makes their security essential to preserving our democracy. Protecting them from cyberattacks will become an absolute necessity.

Standardised hacking techniques

In 2018, more threat actors will adopt plain-vanilla tool sets, designed to remove any tell-tale signs of their attacks. As mentioned earlier, this can also be attributed to the leaked NSA toolkits now being available to rookies, thanks to the Shadow Brokers.

For example, we will see backdoors sport fewer features and become more modular, creating smaller system footprints and making attribution more difficult than ever.

And so, as accurate attribution becomes more challenging, the door is opened for even more ambitious cyberattacks and influence campaigns from both nation-states and cybercriminals alike.

Crypto currencies

The rapid and sustained increase in the value of some cryptocurrencies will push crooks to intensify their fraudulent activities against virtual currency schemes.

Cyber criminals will continue to use malware to steal funds from victims’ computers or to deploy hidden mining tools on machines.

Another perspective is this: cryptocurrencies, including Bitcoin, Ethereum, Litecoin and Monero, maintain a total market capitalization of over $1 billion, which makes them a more appealing target for hackers as their market value increases. Several hacks against Ethereum have temporarily dropped its value in the past few years. So there is a chance that, in 2018, a major hack against one of these cryptocurrencies will damage public confidence.

Artificial Intelligence as a double-edged sword

Across the board, more criminals will use AI and machine learning to conduct their crimes. Ransomware will be automatic – bank theft will be conducted by organized gangs using machine learning to conduct their attacks in more intelligent ways. Smaller groups of criminals will be able to cause greater damage by using these new technologies to breach companies and steal data.

At the same time, as I mentioned above, research into and practical applications of machine learning and AI for detecting and responding to cyber attacks are improving month over month. So large enterprises will turn to AI to detect and protect against new, sophisticated threats. AI and machine learning will enable them to increase their detection rates and dramatically decrease the false alarms that so easily lead to alert fatigue and to incident responders failing to spot real threats. The result should be a significantly reduced MTTD (Mean Time to Detect) and MTTR (Mean Time to Respond).
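For readers who want to track these two metrics, here is a minimal sketch of how they might be computed. The incident records below are invented for illustration, and the definitions are one common convention that I am assuming (MTTD measured from occurrence to detection, MTTR from detection to resolution); organizations define the start and end points differently.

```python
from datetime import datetime
from statistics import fmean

def mean_hours(pairs):
    """Average gap, in hours, between each (start, end) pair of timestamps."""
    return fmean((end - start).total_seconds() / 3600 for start, end in pairs)

# Made-up incident log, for illustration only.
incidents = [
    {"occurred": datetime(2017, 5, 12, 8, 0),
     "detected": datetime(2017, 5, 12, 14, 0),
     "resolved": datetime(2017, 5, 13, 14, 0)},
    {"occurred": datetime(2017, 9, 1, 0, 0),
     "detected": datetime(2017, 9, 3, 0, 0),
     "resolved": datetime(2017, 9, 3, 12, 0)},
]

mttd = mean_hours((i["occurred"], i["detected"]) for i in incidents)
mttr = mean_hours((i["detected"], i["resolved"]) for i in incidents)
print(f"MTTD: {mttd:.1f} h, MTTR: {mttr:.1f} h")
```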

Thanks for reading.

I look forward to your comments and points of view as well.

Title image courtesy: https://www.askideas.com