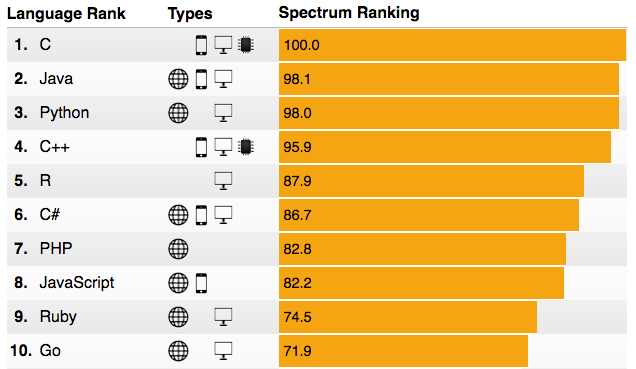

IEEE Spectrum has published its annual Top Programming Languages ranking. The report opens with the line “C is No. 1, but big data is still the big winner”, pointing to the rise of R, the de facto programming language for Big Data analytics, including in the cyber security domain.

I think this is an extraordinary result for a domain-specific language (big data and data science). The other four languages in the top 5 (C, Java, Python and C++) are all general-purpose languages, so R’s place among them is a great feat. It clearly shows how widely R has been adopted and how relevant it is in today’s Information Age, where every device, system or “thing” (IoT) generates some form of data (logs). It also reflects the critical importance of Data Science as a discipline today, with R as the de facto programming language of Data Scientists.

Some interesting lines from the report:

> Another language that has continued to move up the rankings since 2014 is R, now in fifth place. R has been lifted in our rankings by racking up more questions on Stack Overflow—about 46 percent more since 2014. But even more important to R’s rise is that it is increasingly mentioned in scholarly research papers. The Spectrum default ranking is heavily weighted toward data from IEEE Xplore, which indexes millions of scholarly articles, standards, and books in the IEEE database. In our 2015 ranking there were a mere 39 papers talking about the language, whereas this year we logged 244 papers.

R’s steady rise in this and many other surveys and rankings reflects the growing importance of Data Science done in R. And applying Data Science to cyber security, especially to detecting cyber attacks, is becoming more relevant every year.

Detecting cyber attacks with conventional security monitoring tools built on rule-based detection engines (yes, I am talking about SIEM!) is not working anymore. Let’s face it: SIEM is showing its age. Using machine learning to detect cyber attacks has become one of the most important developments in the cyber security domain in the last 10 years. Its relevance is at its peak in today’s world, where all forms of computer systems churn out huge amounts of data (so-called “Big Data”). And R is playing a very important role in helping Security Data Scientists build “algorithmic models” that detect cyber attacks better.

So I am very excited and happy to see R’s popularity and adoption growing year on year.

This is a core area of study I am currently focusing on, and I will be writing more about it here on my blog in the coming months.

Picture Courtesy: ieee.org